EARLY DEVELOPMENTS | 1 |

From mechanical machines to large-scale electronic devices.


At the beginning of the 20th century, numerous coin-operated games gained popularity. In 1930, Gottlieb & Company manufactured a game called BAFFLE BALL, which would become the predecessor of all modern pinball games – a term coined only in 1936. Along with slot machines, these devices pioneered the use of artificially generated sounds, whereas all prior machines had been mostly mechanical in nature (e.g. table-football games) and hardly produced any sounds – or rather noises – other than those caused by the players operating the controls or by ball collisions. For the first time, the player was intentionally provided with auditory feedback about what was going on in the game. This machine marked the first major milestone in the modern era of gambling. The fact that it created sounds in response to the in-game action undoubtedly contributed to the vast success of the game, though probably on a subconscious level rather than in an obvious and direct manner.

The original concept of the game was improved in 1932, when Mr. Harry Williams introduced the »tilt« mechanism to make the game more challenging – the player controls would be temporarily disabled if the machine had been nudged too often or too hard. The same man also created the first electric pinball game, called OBSERVER, in 1933. In the years to come, pinball machines took off rapidly, with entertainment centers full of them springing up almost everywhere. On January 21st, 1942, Mayor Fiorello Henry LaGuardia banned pinball machines in New York City, as many regarded them as games of luck and chance. In 1947, a pinball game called HUMPTY DUMPTY was created by an engineer at Gottlieb & Co., Mr. Harry Mabs, which featured six spring-powered levers to aim for higher-scoring targets on the playfield. This invention was called »flipper bumpers« by Gottlieb (hence the European name »flipper« for pinball games) and allowed the company to argue that pinball was more a game of skill than of chance. However, it was not until 1976 that the New York ban was lifted, when Roger Sharpe, a writer for Esquire Magazine, demonstrated to the City Council that he could drop balls down any preselected lane at the top of a pinball machine by adjusting the way he pulled back the plunger.

Through the years, pinball machines were enhanced with all sorts of electronic and mechanical gadgets to create new attractions for players and keep them interested. Their basic principles of operation, however, have remained largely unaltered for the past 70 years. Even today, pinball machines are commonplace in gambling locations all over the world.


In the early 1950s, a young man named Ralph Baer, a German-born engineer at Loral Corporation, was instructed to build the “best TV-set in the world”. He suggested that some kind of interactive game should be included in the device as a genuine feature that would clearly distinguish the product from other companies' TVs, but the management ignored the idea. It would take another 18 years for his vision to become a reality.

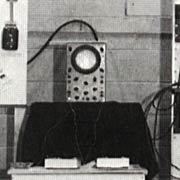

The first working model and ancestor of what we now call video games was created in 1958 by William “Willy” A. Higinbotham. The game had been created to keep visitors to the Brookhaven National Laboratory in New York from being bored and was exhibited there for almost two years. His tennis-type game design used analogue electronics based on vacuum tubes and an oscilloscope display. Although no detailed records seem to have survived, the game was very probably silent. Believing that he had not invented anything of importance, Higinbotham never sought a patent for the device.


The real breakthrough came in 1961, when three young students at MIT (Wayne Wiitanen, J. Martin Graetz and Steve “Slug” Russell) tried to figure out a cool demonstration for the all new and shiny PDP-1 (Programmed Data Processor-1) computer manufactured by Digital Equipment Corporation that was due to arrive at the department a few weeks later. Being SciFi-addicts and keen followers of the American space program, they quickly came up with the idea of an interactive space simulation of some kind – a game. Thus, SPACEWAR! was conceived. There had been some graphic demonstration programs before, namely »Bouncing Ball«, which ran on MIT's Whirlwind mainframe, and »HAX«, which displayed changing patterns according to the settings of console switches and was designed for Whirlwind's successor, the TX-0. Both even had primitive sound support, i.e. timed beeps generated by the console speaker. Prior to these computers, hardly any mainframes featured CRT displays; communication was mostly handled through punched cards (input) and Teletype printers (output).


Even before the PDP-1 actually arrived, the details of the game had been worked out and refined. There would be at least two spaceships, each controlled by a set of console switches. The ships would have a supply of rocket fuel and some sort of weapon: a ray or beam or possibly a missile. And for really hopeless situations, there should be a panic button triggering a »Hyperspace« jump. The group had even given themselves a name which rather accurately described what they were doing: The Hingham Institute Study Group On Space Warfare. When the computer was finally delivered in fall 1961, work commenced immediately. Since the machine came with nothing more than a few diagnostic and utility programs, the MACRO assembler and FLIT debugger that had been developed and used on the TX-0 had to be ported to the PDP-1 in order to create programs for the new machine conveniently and efficiently. After Alan Kotok had obtained a sine-cosine routine directly from DEC, Steve Russell got the first object-in-motion program running in January 1962.


By February there was a crude working version of the game that featured two different spaceships with a supply of fuel and some torpedoes, as well as a random-star background. Upon seeing this early version, Peter Samson was reportedly “offended” by Russell's random stars and went on to create a program called »Expensive Planetarium« based on actual star constellations. The display could remain fixed or move gradually from right to left. Samson even incorporated correct levels of brightness for the different stars by activating certain dots more often than others. Being a nice demonstration in its own right, the program was duly admired and integrated into SPACEWAR! at once. Another important contribution to the game came from Dan Edwards, who was bored by the two plain spaceships and suggested that gravity should be introduced. With Russell pleading innocence of numerical analysis, Edwards also provided the necessary calculations.

The game was essentially complete by the end of April 1962. So many features had been incorporated into the game that it already pushed the limits of the PDP-1's processing capabilities and its memory size of only 4096 words of 18 bits each (9 KBytes). Thus, although sound support had originally been planned, it was never included in the original game, in favour of other features that were deemed much cooler and more important at the time. Indeed, the remaining resources were so limited that gravitational calculations could not be extended to the torpedoes, which resulted in some interesting effects and game tactics. As one of the last additions, a scoring facility was added so that finite matches could be played, at the same time making it easier to limit the time any one person spent at the controls. The game was presented in May 1962 at MIT's annual Science Open House using a second, much larger CRT display.

Since computers were so ridiculously expensive in those days, Russell and his friends could not think of a way to commercialize what they had created and left the game and its source code to be copied freely by anyone who was interested. The group was not aware of it at the time, but they had created the very first open-source game. The game quickly spread to other universities and institutions, and before long almost every single PDP-1 in existence (about 50 machines in total) had its own copy of SPACEWAR! up and running. DEC even started shipping the game as a “diagnostic utility” with all their new computers. Thanks to its addictive nature, many students who had not had a keen interest in computers before were suddenly flocking to the machines and wanted to learn more about them, especially how to write programs. Over the years, many improvements and extensions were programmed into the game by enthusiasts from all over the world, creating hundreds of different versions. The game was ported to other machines and served as the starting point for completely different games.

THE FIRST MACHINES | 2 |


When Nolan Bushnell played SPACEWAR! at the University of Utah in 1962, he became completely obsessed with it, instantly realizing the future commercial possibilities – an encounter that would shape the future of video games forever. Assisted by his friend Ted Dabney, Nolan Bushnell turned his daughter's bedroom into a workshop to design an arcade version of SPACEWAR! in 1970. After only a few months they had created a dedicated hardwired machine that could be hooked up to a TV set.

[Sidebar: excerpt from the original COMPUTER SPACE leaflet]

The arcade game manufacturer Nutting Associates purchased the game, which was titled COMPUTER SPACE. Bushnell was hired to oversee its production. As a result, Nutting Associates released the very first arcade video game just one year later, in 1971. Two different versions existed: a single-player and a two-player machine. About 1500 units were built, but most people found the game too difficult to play. Though it does not give any detailed information on the sound system, the brief manual that accompanied the game offers a few lines on the topic (see sidebar). The built-in speaker of the conventional TV set was probably used to reproduce the sound effects of COMPUTER SPACE.


At the same time, two Stanford students, Bill Pitts and his friend Hugh Tuck, founded a small company which they called »Computer Recreations«. They had realized that with the advent of the moderately priced DEC PDP-11 computer they could create an arcade version of their favourite game: SPACEWAR!. In September 1971 they installed the first version of their GALAXY GAME in the Stanford student union, and students lined up with dimes in their hands to play the game. However, at a cost of about $20,000 it would take quite a lot of coins for the game to become a profitable venture. The two went back to work and designed an updated version with one computer driving either four or eight display consoles. This system was installed in June 1972 and remained in constant use until May 1979 – probably setting a record in the video game industry. However, the system never caught on commercially and remained a local phenomenon. This also explains why so little information is available on GALAXY GAME today; hence, its sound capabilities remain unclear.


In 1966, Ralph Baer rekindled his idea of an interactive TV game. This time, his new employer, defense contractor Sanders Associates, showed some interest. By 1967, Baer and his team had developed a hockey game that was quickly followed by a tennis-like game. They created an early prototype, the legendary “Brown Box”, which still exists and is playable today. The invention was patented in 1968. After unsuccessfully offering the game to several companies, Baer sold it to Magnavox (a U.S. subsidiary of PHILIPS) in 1970.

Based on Baer's original design, the first home video game, called ODYSSEY, was announced by Magnavox in 1972 and put on display at a convention in Burlingame, CA, on May 24. The game featured strictly analog electronics consisting entirely of 40 transistors and 40 diodes, with no costly integrated circuits. It offered players an assortment of a dozen varieties of tennis, hockey and maze games and was attractively priced at $100. Each game variation required a special plastic overlay placed over the TV screen to lend color and dimension to an otherwise simplistic game system – the players even had to keep track of their own scores, since there was no built-in counter. The original ODYSSEY did not support sound at all, even though this feature was incorporated into later versions. In a keynote at the Classic Gaming Expo 2000, Ralph Baer explained this fact:

“PONG [see below] was made with integrated circuits... of course, the machine sold for about $1000. He [Bushnell] could afford to do things that we never could afford to do, like scoring, and sounds, which we could afford to do but didn't, since Magnavox didn't want to do it.”

Caught by surprise, Nutting Associates, who had believed their company was the only one dealing with video games, sent Nolan Bushnell to inspect the game at the Burlingame convention. After spending a few hours playing video tennis and other games on ODYSSEY, Bushnell reported back to Nutting, stating that he had found the game uninteresting and considered it no competition for COMPUTER SPACE. Despite the fact that early Magnavox commercials gave the impression that ODYSSEY would only work with a Magnavox TV set, more than 100,000 units were sold within the first year of its release, making it a huge success.


On the other hand, the rather meagre sales of COMPUTER SPACE had made the game a commercial failure. Bushnell was forced to realize that it was indeed too complicated to play and had come to the conclusion that a much simpler design might be a major draw. He shared these ideas with Nutting, who approved of a new design, whereupon Bushnell – considering himself the brains behind the whole video game business – demanded a third of the company. When Nutting rejected this claim, Bushnell left the company and went on to establish his own business. Thus, on June 27, 1972, Nolan Bushnell and Ted Dabney incorporated their own company: ATARI. To establish a business base, they started installing and servicing pinball machines. Al Alcorn was hired as a game programmer by Bushnell and asked to create a simple video tennis game as an exercise. The game was completed in September and given the name PONG for two reasons: 1) in the game, when the ball hits the paddle or bounces off the wall, a »pong« sound is created, and 2) the name PING-PONG was already copyrighted. For testing purposes, the game was equipped with a coin drop and installed on top of a barrel in a local bar. After only two weeks, the bar owner complained to Alcorn in a midnight call that the game had broken down. When Alcorn checked the machine, he found a most unusual problem: so many quarters had been jammed into the coin box that the game had ceased to function. The rest is history: PONG took the arcade game halls by storm.

By the end of 1973, more than 25 companies were producing games similar to PONG. Fearing that this competition would hurt ATARI, Ted Dabney sold his half of the company to Bushnell. After discovering Bushnell's entry in the guestbook of the 1972 Burlingame convention, Sanders and Magnavox sued ATARI for having copied the idea for PONG from their ODYSSEY game, resulting in the first video game lawsuit. As a result, ATARI paid $700,000 to Magnavox for the right to manufacture video games. All other manufacturers of video games had to pay license fees to Magnavox and Sanders.


After its huge success in arcades, a home version of PONG was developed in 1974, but initially no major retailer wanted to carry the device due to the unsatisfactory sales of the Magnavox ODYSSEY at that time. In 1975, Tom Quinn, a sporting-goods buyer for Sears, Roebuck, offered to buy all the HOME PONG units that ATARI could manufacture, to be sold under Sears' own brand name »Tele-Games« exclusively through Sears outlets. He urged ATARI to double their production to 150,000 units and provided the necessary financing. By fall, Sears had already pre-sold all the games ATARI could manufacture. PONG became the hottest game of the 1975 Christmas season. Several enhanced versions were built by ATARI in the following years, and dozens of other companies built clones and variants of PONG. A whole new industry was born, and soon new concepts and ideas for more sophisticated electronic toys emerged, with the products being readily absorbed by the market.

As an interesting side-note, the sound sample to the right was taken from a much later and presumably relatively rare PONG-variant, the “Bildschirmspiel 01”, manufactured in the former GDR around 1986, which I was lucky to inherit from an uncle of mine.

GOLDEN YEARS | 3 |

At this point, video games had left their infancy behind and many different types of games started to appear all of a sudden.

One of the first documented incidents pertaining to the successful application of sound in an electronic game is illustrated by the following episode:


In 1972, ATARI released a game called TOUCH ME, with four randomly blinking buttons, which the player had to press in the order they lit up. The game was a complete failure. Ralph Baer, upon looking at the game, decided to redo it with random tones accompanying the random lights. He sold the idea to Milton Bradley (MB), who released the product under the name SIMON. Thanks to the added sound, the game went on to become one of the most successful handheld games of all time.


The first programmable console, the legendary ATARI VCS (Video Computer System, later known as ATARI 2600), was released in 1977 at the reasonable price of $200. Since the system was cartridge-based, it was possible to create many different games, all using the same hardware. The device was powered by an 8-bit MOS Technology 6507 CPU running at 1.19MHz; graphics and sound output were handled by a single chip called “Stella” or TIA (Television Interface Adapter). The Stella chip incorporated two audio circuits that could be programmed independently. The sound output of each circuit was controlled via three registers, listed here for the first circuit:

- AUDC0, a 4-bit register that could be loaded with the values 0..15 resulting in 16 different basic sounds, ranging from flute-like, pure tones to explosive noises.

- AUDF0, a 5-bit register, was used to divide a basic frequency of 30kHz by 1..32.

- AUDV0, another 4-bit register, affected the volume of the sound in 16 steps.

With these technical details in mind, it becomes clear that creating conventional music on the ATARI 2600 was not an easy task. The pitches that could be generated using the frequency-division method did not correspond to the equal-tempered tuning of instruments that is traditionally used in contemporary western music. However, the quarter and eighth tones obtained by this method were very well suited to creating tunes closely resembling Indian or Arabic traditional music. A music example from the game ACID DROP (see sidebar) demonstrates how poorly conventional music translated to the VCS, which is why music was ultimately used in only a small percentage of the hundreds of games developed throughout the years.
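The tuning problem can be made concrete with a small sketch. It assumes, as stated above, an exact base frequency of 30 kHz divided by 1..32, and it ignores the influence of the AUDC0 waveform setting on the resulting pitch; the function names and the A4 = 440 Hz reference are illustrative choices, not part of the original hardware documentation.

```python
import math

BASE_HZ = 30_000  # base frequency quoted in the text (assumed exact here)

NOTE_NAMES = ["C", "C#", "D", "D#", "E", "F", "F#", "G", "G#", "A", "A#", "B"]


def nearest_note(freq_hz, a4_hz=440.0):
    """Return (note name, deviation in cents) of the closest equal-tempered note."""
    semitones = 12 * math.log2(freq_hz / a4_hz)  # signed distance from A4
    nearest = round(semitones)
    cents = (semitones - nearest) * 100
    midi = 69 + nearest                          # MIDI number of A4 is 69
    name = NOTE_NAMES[midi % 12] + str(midi // 12 - 1)
    return name, cents


def tia_pitch_table(base_hz=BASE_HZ):
    """List (register value, frequency, nearest note, cents off) for all 32 divisors."""
    rows = []
    for audf in range(32):                       # register value 0..31
        freq = base_hz / (audf + 1)              # divisor 1..32
        name, cents = nearest_note(freq)
        rows.append((audf, freq, name, cents))
    return rows


if __name__ == "__main__":
    for audf, freq, name, cents in tia_pitch_table():
        print(f"AUDF0={audf:2d}  {freq:9.1f} Hz  {name:>4}  {cents:+6.1f} cents")
```

Running the script prints how far each of the 32 available pitches lies from the nearest note of the equal-tempered scale; several of them miss by a sizeable fraction of a semitone, which is the effect described above.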


While sales in the domestic sector were developing extremely well, the arcade business was not forgotten. In 1978, ATARI created FOOTBALL using a new trackball controller. The game became a huge success, only to be surpassed by Taito's SPACE INVADERS, released by Midway, which hit the arcades later that year. Within six months it became so popular that it caused school truancy in the U.S. and coin shortages in Japan. For the first time, a game displayed a high score, encouraging players to beat that mark and causing them to play longer and more often than they had with other games. Also, SPACE INVADERS would become the first game to emerge from bars and arcades and make its way into environments such as restaurants, pizza parlors and ice cream shops. In Japan, a large part of the formerly omnipresent Pachinko machines had to make way for the new alien-shooter phenomenon, whereas in the U.S. conservative adults were becoming more and more concerned that the game “soured the minds of their youngsters”. Residents of Mesquite, Texas pushed the issue all the way to the Supreme Court in their efforts to ban the illicit machines from their Bible-belt community.

One year later, in 1979, ATARI presented another arcade game that would become their all-time best seller with more than 70,000 units sold: ASTEROIDS. Instead of a standard TV-like raster-scan display, the game was equipped with a vector display, very similar to an oscilloscope, causing lines to appear extremely smooth and sharp in contrast to the jagged appearance that was common to most other games. In addition, Howard Delman, who was responsible for the game's intense sound system, had to design individual hardware circuits for each type of sound (such as explosions and the background “heartbeat” that ASTEROIDS was famous for), since at the time no such thing as an all-purpose sound chip existed. As a unique feature of ASTEROIDS, players could enter their three-character initials at the end of the game, which would then be displayed in a high-score list.

With BATTLEZONE the first arcade game using a three-dimensional first-person perspective was created by ATARI in 1980. The game became quite popular, and received so much acclaim that a modified version was ordered by the military with a more realistic depiction of events and life-like controllers for training of U.S. Army gunners.

When ATARI released the home version of SPACE INVADERS in 1980, sales of the VCS console skyrocketed. Many people bought a VCS just to play the famous arcade hit at home. By mid 1981, more than one million copies of the game had been sold. The same year, many programmers left ATARI because of the company's refusal to give proper credit to the developers inside the games they had created or on the game packages. They created their own company, Activision, and started to write games for the VCS. Motivated by the fact that they were now programming for their own good and with a proper share of the profit, they produced many games that would turn out superior in quality and gameplay to anything the VCS had seen to date.


In 1980, the first real contender to the VCS was launched by Mattel Electronics. Their device was called INTELLIVISION (from Intelligent Television) and retailed for $299. It was technologically superior to the ATARI 2600 and marketed toward technophile and ‘intellectual’ game players, which was reflected by their Masterpiece Theatre-like ads with George Plimpton. More than one million units had been sold by the end of 1982.

The INTELLIVISION was powered by a General Instrument (GI) CP1610 processor running at roughly 900kHz; graphics were handled by a GI AY-3-8900-1 processor that was capable of producing a display resolution of 160x96 pixels in 16 fixed colours (eight “primaries” and eight “pastels”). The sound chip used in Mattel's console was a GI AY-3-8914. It could create three separate channels of sound (voices), each of which could be individually controlled for frequency and for either volume or envelope (attack/decay patterns). In addition to the three voices, a noise channel was provided that could be coarsely controlled for frequency and added to the output when desired. All channels were downmixed to a single mono signal for output to the TV. The pitch of a given voice was obtained via the frequency-division method, exactly as with the ATARI “Stella” chip, with the important difference that a 12-bit (8+4) register was used to set the divisor, allowing for 4096 possibilities instead of only 32 in the ATARI 2600. This finally allowed for pitch-accurate reproduction of music (see, or rather listen to, the example in the sidebar). The GI AY-3-8914 sound chip and its derivatives were later used in a number of other computer systems, including the Sinclair ZX Spectrum, Amstrad CPC and ATARI ST.
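Why a wider divisor register matters for musical pitch can be illustrated with a rough sketch. It compares the worst tuning error over a two-octave chromatic scale for a 5-bit and a 12-bit divisor. The 30 kHz base for the 5-bit case is the figure quoted for the ATARI 2600 above; the base frequency used for the 12-bit case is a purely hypothetical value chosen for illustration and does not model the real chip's clock or prescaler.

```python
import math


def cents(freq_hz, ref_hz):
    """Signed distance between two frequencies in cents (100 cents = 1 semitone)."""
    return 1200 * math.log2(freq_hz / ref_hz)


def best_divisor(target_hz, base_hz, bits):
    """Divisor in 1..2**bits whose output base_hz/divisor lies closest to target_hz."""
    max_div = 2 ** bits
    ideal = base_hz / target_hz
    # The error grows monotonically away from the ideal value, so only the
    # two neighbouring integers (clamped to the legal range) need checking.
    candidates = {
        min(max(math.floor(ideal), 1), max_div),
        min(max(math.ceil(ideal), 1), max_div),
    }
    return min(candidates, key=lambda d: abs(cents(base_hz / d, target_hz)))


def worst_error_cents(base_hz, bits, notes):
    """Largest absolute tuning error over a list of target frequencies."""
    return max(
        abs(cents(base_hz / best_divisor(n, base_hz, bits), n)) for n in notes
    )


# Equal-tempered chromatic scale from C4 to C6, with A4 = 440 Hz.
SCALE = [440.0 * 2 ** (k / 12) for k in range(-9, 16)]

if __name__ == "__main__":
    print("5-bit divisor, 30 kHz base:   ",
          round(worst_error_cents(30_000, 5, SCALE), 1), "cents worst case")
    print("12-bit divisor, assumed base: ",
          round(worst_error_cents(111_860, 12, SCALE), 1), "cents worst case")
```

With only 32 divisors, whole stretches of the scale are out of reach, whereas under these assumptions the 12-bit divisor stays within a few cents of every note.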

A very interesting quality of the INTELLIVISION was its modular design, allowing for all kinds of extensions. One such peripheral was a Keyboard Component that, combined with another module, the Entertainment Computer System, was meant to turn the device into a fully fledged home computer system. While these extensions failed in the marketplace, another upgrade enjoyed some degree of popularity: the INTELLIVOICE speech synthesis module.

Equipped with this add-on, the INTELLIVISION was capable of producing intelligible speech – a first in home computing. Now the player could concentrate on the on-screen action without having to constantly monitor energy or shield levels, which, if critical, would be brought to the player's attention by a computer-synthesized voice. The feature was used in a few games released by Mattel, but after the initial hype, interest in the INTELLIVOICE quickly faded. The reasoning was that, although speech was considered a valuable quality in a game, people were not willing to pay extra money for a module.


With Namco's PAC-MAN, the year 1980 saw the release of what would become known as “the most popular arcade video game ever”. Trapped inside a maze, the famous dot-eating Pac-Man is hunted by four ghosts, each following a different strategy. This simple yet intriguing setup caused players to flock to the machines – for the first time, people had to actually stand in line and wait for their turn on the joystick. Like SPACE INVADERS before it, the game quickly became the major attraction in arcade halls all over the world, only on a much wider scale. Its immense popularity with both men and women – another novelty – led to the game pulling in four million quarters within the first twelve months, and it was rumored to have caused a Yen shortage in Japan. With more than 300,000 original machines sold worldwide, counterfeit units were an attractive business – it is estimated that for each genuine system one unlicensed clone was manufactured.

Through frequent play, expert players emerged who had discovered certain patterns that allowed them to avoid the ghosts. As a result, players started spending less money on the game and playing insanely long games on one quarter, which upset many arcade owners. In response, the manufacturers provided different chipsets to be put inside the machine to modify the old ghost patterns so that the players' strategies would no longer work. Of course, the players would adapt to the new situation after a short time... Some masters of the game could even tell which PAC-MAN variant was running on a given machine after watching the game for a while.


Nonetheless, PAC-MAN did exceedingly well, with many consequences for businesses. For the first time, it seemed that a video game could be marketed like a cartoon, and from 1981 to 1983, tons of PAC-MAN paraphernalia were produced. The products included large amounts of clothing, bedspreads, bumper stickers, mugs, dolls, grammar school products, towels, bags, pins, and so on.

It was not until 1982 that ATARI released the highly anticipated home version of PAC-MAN. While the version for the ATARI 400/800 home computers (see below) and the 5200 console was considered one of the best arcade translations ever made, the version for the more popular 2600 was deemed a horrible failure. The game did not resemble the popular arcade version at all, and many people became disenchanted with the company, feeling they had been somewhat deceived. Still, the game was a commercial success, since it was simply a “must-have” item for the 2600 owner.


When the COLECOVISION was released in 1982 as a “third generation” home video game system, it was the system to get. The manufacturer Coleco (a contraction of Connecticut Leather Company) had equipped their cartridge-based video game with state-of-the-art electronics and a fair amount of memory, resulting in superior graphics and sound. The console was initially shipped with an adaptation of Nintendo's 1981 arcade smash-hit DONKEY KONG – which is believed to be one of the major reasons for its success. It topped sales charts in 1983, beating both ATARI and Mattel. The COLECOVISION was driven by a 3.58 MHz 8-bit Z-80A microprocessor and furnished with an SN76489AN sound chip by Texas Instruments.

Like the General Instrument sound chip used in Mattel's INTELLIVISION, the Texas Instruments counterpart inside the COLECOVISION was capable of producing three voices and an additional noise channel. The TI chip, however, was less sophisticated, allowing only for control of pitch and attenuation. To obtain the desired pitch, a base frequency of 3.579MHz was divided by 32 times a given 10-bit value, resulting in 1024 possible pitches, not all of which were useful. The volume was controlled via a 4-bit register which set the sound's attenuation from 0 to 28dB in 2dB steps.
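The pitch and volume arithmetic described above can be sketched in a few lines. The base frequency and the factor of 32 are taken from the text; the function names are illustrative, the divider value 0 is simply left out, and treating the sixteenth attenuation value as "off" is an assumption that the text does not state.

```python
CLOCK_HZ = 3_579_000  # base frequency as quoted in the text


def tone_frequency(n, clock_hz=CLOCK_HZ):
    """Output frequency for a 10-bit divider value n (0 is excluded in this sketch)."""
    if not 1 <= n <= 1023:
        raise ValueError("divider must be in 1..1023")
    return clock_hz / (32 * n)


def divider_for(target_hz, clock_hz=CLOCK_HZ):
    """Divider value whose output comes closest to target_hz, clamped to 1..1023."""
    n = round(clock_hz / (32 * target_hz))
    return min(max(n, 1), 1023)


def attenuation_to_gain(level):
    """Convert a 4-bit attenuation value to a linear amplitude factor.

    Levels 0..14 correspond to 0..28 dB in 2 dB steps. Level 15 is treated
    as silence here, which is an assumption of this sketch.
    """
    if not 0 <= level <= 15:
        raise ValueError("attenuation level must be in 0..15")
    if level == 15:
        return 0.0
    return 10 ** (-(2 * level) / 20)


if __name__ == "__main__":
    n = divider_for(440.0)
    print("divider for A4:", n, "->", round(tone_frequency(n), 2), "Hz")
    print("lowest tone:", round(tone_frequency(1023), 2), "Hz")
    print("gain at 20 dB attenuation:", attenuation_to_gain(10))
```

Because the divider sits in the denominator, the available pitches are spaced very finely in the bass and increasingly coarsely towards the treble, which is one reason why not all of the 1024 values were musically useful.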

The Expansion Module #1, also known as the ATARI adapter, made it possible to play all ATARI 2600 games on the COLECOVISION for a mere $60. Released in 1982, the module was practically a complete re-design of the VCS, lacking only the power pack and the RF modulator. Through this feat, the COLECOVISION gained access to the largest pool of game titles in existence. Of course, ATARI did not approve of this and sued Coleco for $350 million, charging patent infringement. In return, Coleco filed a $500 million counter-suit alleging violations of Federal Antitrust laws. After a few months, the two companies settled out of court with an agreement that Coleco would pay royalties on the adapter.

HOME COMPUTERS | 4 |

Since the late 70s, another line of computerized devices had been developing alongside the arcade and home video games, one that has so far been neglected in this article: the home computer.


In 1975, the MITS Altair 8800 computer kit had been made available through mail-order for $397. Thanks to its reasonable price, it was one of the first computers to get into people's homes. However, the Altair's practical usability was relatively limited. Its design resembled that of a mid-1960s computer – pretty much like the ones commonly seen in the popular “Star Trek” science-fiction TV series: data input was accomplished through switches, and a number of LEDs on the box handled all output. Incidentally, the Altair was named after an episode of the very same TV series.

The so-called “home computer” came into existence when Steven Paul Jobs and Steve Wozniak created the Apple I computer mostly from parts that Jobs had “borrowed” while working for ATARI. From July 1976, the device was sold to hobbyists and electronics enthusiasts in kit form through local stores for $666.66. It featured a MOS 6502 processor, 4KB memory, QWERTY-keyboard and 40x25 text graphics.

Though only a moderate success with about 200 units sold in total, the Apple I paved the way for the much improved Apple ][ computer. Equipped with revolutionary colour graphics, no fewer than eight expansion slots, a decent QWERTY-keyboard and game paddles, the computer entered the market in June 1977 at $1,300. The system was also capable of producing sound, albeit limited to simple clicks. Only through some very clever programming was it possible to create tones by turning the speaker on and off in quick succession. However, this technique was rarely used in real-life applications, with the notable exception of the two games DUNG BEETLES and SEA DRAGON, which demonstrated what was possible with the limited resources.
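
The trick of turning clicks into tones can be illustrated with a small simulation. The following Python sketch does not reproduce actual Apple ][ code; it merely assumes a one-bit speaker whose state is flipped twice per period of the desired tone, and the function name and the sample rate are chosen for this example only.

```python
def click_train(frequency_hz: float, duration_s: float,
                sample_rate: int = 44_100) -> list[int]:
    """Simulate a 1-bit speaker toggled twice per period of a tone."""
    half_period = sample_rate / (2 * frequency_hz)  # samples between toggles
    state = 0                 # the speaker knows only two positions
    next_toggle = half_period
    samples = []
    for n in range(int(duration_s * sample_rate)):
        if n >= next_toggle:
            state ^= 1        # one "click": flip the speaker position
            next_toggle += half_period
        samples.append(state)
    return samples


if __name__ == "__main__":
    wave = click_train(441.0, 1.0)
    toggles = sum(a != b for a, b in zip(wave, wave[1:]))
    print(len(wave), toggles)
```

Since the processor itself has to time every toggle, it can do little else while a tone is playing, which may explain why the technique was rarely used in practice.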

Also in 1977, another microcomputer, the PET (Personal Electronic Transactor), was introduced by Commodore. The model with 4K memory was sold for $595, the 8K variant went for $795. The PET went on to become the first mass-market computer, and before long it was decided to double the price for its European release, since it had been discovered that customers were still happily paying the higher amount. Not even the higher price hindered its immense success – customers had to wait up to six months to get hold of their PETs. A major drawback of the original PET 2001 was its keyboard, which consisted of 73 identical tiny buttons, not unlike those commonly found on pocket calculators. Other than that, the system was abundantly equipped, featuring even an internal »datasette« cassette tape drive and a 9" blue or green on black display. Later models, like the PET 2001-N and the 2001-B, had a much improved true QWERTY-keyboard, 16K or 32K of memory and an internal piezo speaker, but lacked the internal datasette. The speaker was mainly used to create a right-margin beep like the one commonly found on conventional typewriters. A large pool of well-programmed applications, from chess games to word processors, quickly became available, adding to the popularity of the system. Later models of the PET were re-named CBM (for Commodore Business Machines) since PHILIPS owned the rights to the term and forbade its use by Commodore.

Another popular computer released in 1977 was the TRS-80 Model I manufactured by Tandy and sold through Radio Shack outlets for $599.95. More than 10,000 orders were taken within the first months of its release. Though the device did not have built-in sound, it was possible to use an attached cassette tape recorder (normally used for data storage) to produce an organ-like sound. With an additional program called Orchestra-80, the TRS-80 was able to generate up to five individual voices for music playback. The creation of music on the TRS-80 appears to have enjoyed tremendous popularity with its users. Today, more than 2,500 music files are available for download, along with a plugin for WinAMP to play them on the PC.

ATARI released their first home computers in 1979. The 400 and 800 models were based on a MOS 6502 processor running at 1.79 MHz – which was roughly twice the speed of previous machines. Their initial built-in amount of memory was 8KB and 16KB, respectively, but most units sold later were equipped with 16KB or 48KB. The 400 was sold for $550, whereas the 800 went for $1,000. They both featured high-resolution graphics with up to 128 colours displayable simultaneously, as well as four-voice synthesized sound. There was an additional built-in speaker that could be used to output key-clicks and other sounds. The sound chip, called »Pokey«, was used in all later ATARI 8-bit computer models as well as some ATARI arcade games like STAR WARS. Though it could only produce square-waves, it delivered the best sound available on any computer platform at the time. The pitch of each voice was controlled through 8-bit frequency registers, allowing for a range of about three octaves. However, channels could be combined to form two 16-bit frequency channels and the base frequency for the division could be set to 15kHz, 64kHz or 1.79MHz. The volume for each channel could be controlled in 16 steps. There was no extra noise-channel, but variable distortion could be applied to each voice. By means of some very clever programming, it was also possible to play short speech patterns, albeit barely intelligible.
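
Why the combination of two channels into one 16-bit channel mattered can be shown with a little arithmetic. The sketch below assumes a simplified divider formula, output = base / (2 × (N + 1)), and uses the rounded base frequencies quoted in the text; the real chip is understood to add a small fixed offset at the 1.79MHz setting, which is ignored here. All names are invented for this example.

```python
import math


def nearest_pitch(target_hz: float, base_hz: float, bits: int) -> tuple[int, float]:
    """Return the register value closest to target_hz and the pitch it yields.

    Assumes the simplified formula: pitch = base_hz / (2 * (value + 1)).
    """
    max_value = (1 << bits) - 1
    value = round(base_hz / (2 * target_hz)) - 1
    value = max(0, min(max_value, value))
    return value, base_hz / (2 * (value + 1))


def cents_off(actual_hz: float, target_hz: float) -> float:
    """Tuning error in cents (hundredths of a semitone)."""
    return 1200 * math.log2(actual_hz / target_hz)


if __name__ == "__main__":
    target = 880.0  # the note A5
    for label, base, bits in (("8-bit at 64kHz", 64_000, 8),
                              ("16-bit at 1.79MHz", 1_790_000, 16)):
        value, pitch = nearest_pitch(target, base, bits)
        print(label, value, round(pitch, 2), round(cents_off(pitch, target), 2))
```

With these assumptions the single 8-bit channel misses the target note by roughly 17 cents, while the combined 16-bit channel lands within a fraction of a cent. This is consistent with the limited usable range of the 8-bit registers mentioned above.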

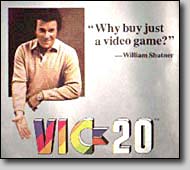

Commodore introduced the VIC-20 home computer in January 1981 for $299. It was the first home computer affordable to virtually anybody, in accordance with the motto of the founder of Commodore, Jack Tramiel: “We make computers for the masses, not for the classes.” The success confirmed the validity of this practice – more than 2.5 million units were sold during its lifetime from 1981 to 1984. Commodore's advertising campaign featured William Shatner with the slogan: “Why buy just a video game?”

The VIC-20 was a fairly versatile device which was initially equipped with only 5KB memory that could be easily upgraded using expansion modules. Thanks to its general-purpose serial bus interface, a variety of peripherals, like floppy disk drives or printers, could be connected directly to the VIC-20 without problems. The computer was powered by a MOS 6502B CPU running at roughly 1 MHz; graphics as well as sound were handled by a MOS 6561 chip called »VIC« (Video Interface Chip) – the heart of the design, which also gave the computer its name. The number “20” in the name represented the 20KB of Read-Only Memory (ROM) installed, which contained the CBM BASIC V2.0 programming language. The sound part of the VIC was not very sophisticated: it contained three voices that could only produce square-waves, as well as an extra noise-channel. Pitch could be controlled through 8-bit registers in 256 steps and the volume for each channel could be modified in 16 steps.

After the great success of the VIC-20, Commodore introduced its successor, the C-64, in 1982. No one knew it at the time, but this little fellow would go on to become the best selling home computer in history. Though its physical appearance was almost identical to the VIC-20, a great many things had changed on the inside. With a whopping 64KB memory, an enhanced graphics chip, a highly sophisticated sound chip and a suggested retail price of $595, it was considered a huge value at the time. The VIC-20 was still going strong, so it was decided that the C-64 should be marketed alongside it instead of replacing it as originally planned. By the end of 1983, the VIC-20 finally began to fade and the C-64 took the lead in sales figures. By then, its street price had reached a mere $200 and more than 200,000 computers were manufactured by Commodore each month. Thanks to its modest price, customer interest in this amazing new computer began to heat up. People who had never considered buying a home computer before were suddenly finding themselves proudly carrying their brand new C-64 out of a local store. Thousands of games and other programs were written and sold on cartridges, computer tapes or floppy disks. Magazines and gazettes appeared that offered help and support and covered hardware and software developments related to the device. During its lifetime of almost 10 years, the C-64 was only slightly modified, the one major re-design coming in 1986, when the C-64 II was introduced by Commodore. The device enjoyed an unparalleled popularity, with an estimated total of 20-25 million units sold worldwide between 1982 and 1992.

While the graphics capabilities of the C-64 were pretty much comparable to other home computers like the ATARI 400/800 or game consoles like the INTELLIVISION or COLECOVISION, its amazing sound chip, the SID 6581, was clearly ahead of its time. It had been developed in an almost impossibly short period of time by Bob Yannes, who later co-founded the famous synthesizer company Ensoniq. In a 1986 interview he commented on the other sound chips used at the time:

“I thought the sound chips on the market (including those in the Atari computers) were primitive and obviously had been designed by people who knew nothing about music. As I said previously, I was attempting to create a synthesizer chip which could be used in professional synthesizers.”

The SID featured three voices that could be used independently or in conjunction to create complex sounds. Each voice could be programmed to generate either a triangle, sawtooth, variable pulse or noise waveform. Pitch was controlled through a 16-bit register in 65,536 steps, allowing for tone-sweeps from note to note (portamento) without any discernible frequency steps. When triggered, an envelope generator could create an amplitude envelope with variable rates of attack, decay, sustain and release. An additional programmable filter was provided that could be used to generate complex, dynamic tone colors. Also, it was possible to process external audio signals, allowing multiple SID chips to be daisy-chained or mixed in complex polyphonic systems.
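
How fine the steps of a 16-bit pitch register are can be sketched as follows. The example assumes the commonly documented relation between register value and pitch, frequency = value × clock / 2^24, and a nominal clock of 1MHz (the actual clock differed slightly between PAL and NTSC machines). The function names are invented for this illustration, and the sweep is merely one plausible way to step a register from note to note.

```python
SID_CLOCK_HZ = 1_000_000  # assumed nominal clock
ACCUMULATOR = 1 << 24     # assumed 24-bit phase accumulator


def sid_register(frequency_hz: float) -> int:
    """Return the 16-bit register value closest to the requested pitch."""
    value = round(frequency_hz * ACCUMULATOR / SID_CLOCK_HZ)
    return max(0, min(0xFFFF, value))


def sid_frequency(value: int) -> float:
    """Return the pitch in Hz produced by a 16-bit register value."""
    return value * SID_CLOCK_HZ / ACCUMULATOR


def portamento(start_hz: float, end_hz: float, steps: int) -> list[int]:
    """Register values for a smooth sweep between two notes (steps >= 2)."""
    ratio = end_hz / start_hz
    return [sid_register(start_hz * ratio ** (i / (steps - 1)))
            for i in range(steps)]


if __name__ == "__main__":
    print(sid_register(440.0), round(sid_frequency(1), 4))
    print(portamento(440.0, 880.0, 5))
```

Under these assumptions one register step corresponds to about 0.06 Hz, far below what the ear can resolve at musical pitches, so a sweep through successive values is heard as a continuous glide.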

Even today, the C-64 and the SID are still alive and kicking. Through the use of special emulator programs it is possible to run C-64 software or play SID-music on modern desktop PCs without the need to even own the real McCoy. Almost every popular C-64 program can be found on dozens of dedicated Internet sites, along with more than 14,000 songs written for the SID.

On Wednesday, August 12, 1981, International Business Machines (IBM) announced the IBM Personal Computer (PC) at the Waldorf-Astoria Hotel in New York City. Based on an Intel 8088 microprocessor running at a speed of 4.77MHz, the base system was equipped with 64KB RAM, a 5.25" floppy disk drive and the Microsoft Disk Operating System (MS-DOS), and had a retail price of $1,565. Loaded with options, the price could easily reach $3,000 to $6,000. The most interesting aspect of the PC was its completely modular design – components could easily be exchanged or replaced by more advanced versions. For instance, initially only a monochrome text display adapter was available, which could later be replaced with a colour graphics model. Also, thanks to the use of standardized connectors and protocols, IBM had created an ‘open’ system, i.e. third-party manufacturers could develop their own products for the PC system. One of the first companies to produce such an add-on was Hercules, who in 1982 introduced a monochrome graphics card that could also display high-resolution graphics. Even more importantly, the whole approach taken by IBM was based on readily available microchips and components, which allowed other companies to build their own PC-compatible systems, commonly known as IBM clones. COMPAQ was the first to introduce such a system in 1982.

The IBM-compatible PC quickly became the standard office computer all over the world. Due to its high price, along with the initial lack of suitability for games, it only gradually found its way into people's homes. Even then, it was mostly used for text-processing or spreadsheet calculations, being simply too expensive a toy to play with. Though IBM continued to be the driving force in PC development for years after the release of their first model, the situation had fundamentally changed by the end of the 1980s. With hundreds of different manufacturers offering compatible components, a PC system could practically be assembled by anyone with a little technical experience. It was this flexibility and independence from a single company that has made the PC as popular as it is today. A history of the evolution of sound and music on the PC will be presented in Part C of this work.

VIDEOGAME CRASH | 5 |

In 1982, sales of video games and consoles had reached dizzying heights, with more than 20 million ATARI VCS consoles sold and millions of game cartridges on the shelves. Seeing how much money could be made with relatively little effort, many companies had jumped on the video game train. It all seemed so easy: just find some programmers to create any kind of video game and get it out there in the stores. As a result, the market was virtually flooded with games, most of them of questionable quality. Huge bargain bins of outdated or unoriginal games had become commonplace in stores, while it was increasingly difficult to find the quality games that had driven the market only a few years before. Failed translations of arcade games like PAC MAN (see above) and highly promoted original games like ATARI's “E.T.” that had not met the public's expectations did not help the situation.

In addition, home computers were becoming very popular – by 1984 they had made a large dent in the videogame market. Not only could games be played on them in excellent quality but they could also be used for many other tasks, like text processing or calculation. It was even possible to create your own programs, which was a lot of fun to do and something many people did, assisted by dedicated computer magazines. With their low price and considerably higher value thanks to their versatility, they posed a real threat to the game consoles that had ruled the video game market since 1977.

With far too many companies in the business and declining sales in both video games and console hardware, the whole U.S. video game market ultimately collapsed in 1984. Instead of a gradual transition with sales slowly ebbing away, it seemed as if all at once people had decided not to buy consoles and games anymore. Many companies went broke and some major players had to either file for bankruptcy a few years later, like Coleco, or give up their entire video game branch, as Mattel was forced to do. Even ATARI was barely clinging on, largely thanks to their arcade and home computer business, and never entirely recovered.

Arcade games, on the other hand, were still doing well, as was the video game market in Japan. There, Nintendo had just released the FAMICOM (short for Family Computer) game console for $100. The device had taken the market by storm and quickly pushed other consoles toward the verge of insignificance. Originally, Nintendo had not planned to release the FAMICOM in the United States. However, after the U.S. video game market had broken down, Nintendo's managing director, Hiroshi Yamauchi, decided to reconsider that decision. Yamauchi had already realized that the success of a video console was largely dependent on the quality of the games available for the device and correctly attributed the U.S. breakdown to the players' frustration with the huge amounts of low-quality software rather than a lack of interest in video games as such. In Japan, Nintendo was rigorously controlling game software; they had set up programming teams that competed with each other, and only the best games were released. Games created by third-party manufacturers required a licence, which, in turn, provided Nintendo with a considerable share of the sales profits of these games. Thanks to a so-called lockout-chip inside the FAMICOM, only cartridges manufactured and thus approved of by Nintendo could be used. This practice remained intact until 1988, when Tengen, an ATARI subsidiary, found a way to bypass the chip and started producing their own cartridges.

For the Christmas season of 1985, Nintendo had successfully convinced a few major U.S. chains to offer the FAMICOM. To support the U.S. release, the console was re-designed to offer a more serious appearance and all terms that could somehow remind dealers of the disastrous 1984 crash were strictly avoided. Instead of »joystick« or »controller«, the new phrase »control deck« was used, »game cartridge« had become »game pack« and, to erase all memories of a »video game«, the FAMICOM was re-named NINTENDO ENTERTAINMENT SYSTEM (NES) for its U.S. launch – which is also the name under which it became famous throughout the rest of the world. U.S. sales started slowly, but rapidly gained momentum each following year: one million units in 1986, three million in 1987, seven million in 1988. By 1989, a NES console was already found in every fourth American household, one year later in every third. More than 62 million consoles and 350 million games were sold until 1994, when Nintendo officially discontinued the product in favour of their 16-bit console (the SNES, see below) released three years previously.

The heart of the NES was a MOS 6502 processor running at 1.79 MHz, exactly as found inside the ATARI 400 and 800 home computers. Graphics could be displayed with a resolution of 256x224 in 52 colours, half of which could be displayed simultaneously. In contrast to all other contemporary devices, the NES did not contain a special soundchip. Instead, sound output was realized solely through analog circuitry. A total of five channels was available: two square voices, one triangle voice, a noise channel and a digital channel offering the possibility to play digitized sounds. The latter represented quite a novelty for home devices – before, it had only been possible to play back digitized sounds using clever programming tricks or special add-on modules such as Mattel's INTELLIVOICE. The original FAMICOM was missing this fifth sound channel and was therefore unable to reproduce digitized sounds. However, the feature was only rarely used in NES games, since digitized sound data required large amounts of storage, which was simply not available in ROM game cartridges, or only at a high expense.

The SEGA MASTER SYSTEM was released in 1986 to compete with the NES. Though technically slightly superior, it was unable to grasp a significant portion of the market. Pretty much the same happened with the ATARI 7800 that was finally launched in the same year, having been withheld since the crash of 1984. Unlike the ill-fated ATARI 5200 released in 1982 – essentially a stripped down version of the ATARI 400 home computer without the keyboard – the 7800 offered out-of-the-box compatibility with existing 2600 game cartridges. Both the SEGA and ATARI units came too late to cause any serious competition for Nintendo, which was enjoying an increasingly firm grip on the market, outselling the others by 10 to 1.

An important milestone related to sound and music was marked by the release of the Commodore AMIGA 1000 home computer in 1985. The device had been developed by Jay Miner, a former ATARI employee who had also headed up the chipset design of the 2600 video game. His own company, Amiga Corporation, was taken over in 1984 by Commodore, who released the product under their own label one year later. The AMIGA featured a revolutionary design based on custom chips – also dubbed co-processors – with such colourful names as Agnus (memory access, graphics and animation), Paula (sound, I/O) and Denise (display processor). The device was powered by a Motorola 68000 16-bit processor running at the incredible speed of 7.16 MHz, compared to the average MOS 6502 8-bit CPU of existing home computers crawling along at 1 or 2 MHz. Marketed at an initial $1,300, the AMIGA 1000 was equipped with 256KB memory, expandable to 512KB internally and 8MB externally. It had a built-in 3.5" floppy disk drive, a detachable 89-key keyboard and a mouse, and came with bundled software, which included the AmigaDOS “Workbench” graphical operating system, the AmigaBASIC programming language, a tutorial and a voice synthesis program.

Despite its technical advantages, the success of the very first AMIGA was fairly limited owing to its price, which was simply too high for the consumer market. However, its successors, the AMIGA 500 and 2000, enjoyed considerable popularity, especially in Europe. While the AMIGA 2000 had been developed as a high-end device with 1MB memory, an external keyboard, and a desktop case that offered convenient expansibility through numerous extension slots and extra drive-bays, the AMIGA 500 was targeted at the mass market and came in a wedge-like all-in-one case that lacked some of the upgrade options and housed only half the memory. Commodore's policy worked out and the A500 was the machine that finally brought the world's attention to the AMIGA.

The reason why the AMIGA deserves a special place in this timeline is its sound chip, Paula. Instead of relying on a sound synthesizer chip with a given number of channels, waveforms and other features, as found in all video game consoles and home computers at the time, the AMIGA was capable of reproducing four channels of digitized sound with a resolution of 8 bits. Only the NES (see above) had previously offered anything in this direction, albeit only with a single channel and very limited practical value. The AMIGA even featured stereo sound, with two channels assigned to the left stereo output and the other two to the right. Built-in hardware support for amplitude as well as frequency modulation of the digitized sound allowed for various interesting and useful effects, such as vibrato or enveloping. Music was composed using digitized samples of instruments that were played back at different speeds (resulting in changing pitches) through the four available channels. A program that let the user create such compositions with ease was called a »tracker«, while the resulting pieces were stored in a roughly standardized file format called »module«, or »MOD« for short.
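
The principle of changing the pitch of a digitized instrument by changing its playback speed can be sketched as follows. This is not a model of the Paula chip, which was driven by setting a playback rate per channel; the example merely assumes a fixed output rate and steps through the sample faster or slower, picking the nearest stored value. The function name is hypothetical.

```python
def repitch(sample: list[int], semitones: float) -> list[int]:
    """Play a digitized sample faster or slower to shift its pitch.

    A positive number of semitones raises the pitch (and shortens the
    sound); a negative number lowers it (and lengthens the sound).
    """
    step = 2 ** (semitones / 12)  # twelve semitones double the speed
    output = []
    position = 0.0
    while position < len(sample):
        output.append(sample[int(position)])  # nearest stored value
        position += step
    return output


if __name__ == "__main__":
    instrument = list(range(100))             # stand-in for 8-bit sample data
    print(len(repitch(instrument, 12)))       # one octave up: half as long
    print(len(repitch(instrument, -12)))      # one octave down: twice as long
```

The sketch also shows the side effect familiar from tracker music: a single recorded instrument sounds shorter when played high and longer when played low.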

Thanks to the use of digitized natural sounds, it was possible to create music that for the first time closely resembled its real-world counterpart. The kind of sounds that could be created was no longer limited by the capabilities of a given synthesizer chip; practically every sound that existed could be digitized and used. Other contemporary 16-bit home computers, like the ATARI 520ST, were still equipped with synthesizer chips delivering mono sound and could not compete with their AMIGA counterpart in that respect. The ATARI ST, however, featured a built-in MIDI port that made the device very popular among musicians, who could easily control their external synthesizers, samplers, sequencers and other equipment using special software running on the machine.

Aside from the positive impact the AMIGA's support for digitized sound had on computer music, games were also greatly benefitting from it in their own domain. Where there had only been remote resemblances of natural sound before, it was suddenly possible to use actual data sampled from a real-world event or created by whatever means came to the sound designer's mind. A brave new world had dawned upon game sound, making it much more realistic than ever heard before and limited in fidelity only by the storage capacity of the media the game was delivered on – a 3.5" floppy disk with a capacity of 880KB, in the case of the AMIGA.

The AMIGA continued to prosper with enhanced models released in later years. The A600 and A1200 in particular, released in 1991 to replace the A500, were quite successful. The A2000 was, in turn, replaced by the A3000 and A4000 models. The new machines were generally more powerful and included better graphics chips, though sound capabilities remained the same. However, by some strange turn of events, the whole AMIGA home computer series had always been treated by Commodore like a stepchild and was never properly marketed. Given the great technical potential and attractive pricing, the AMIGA should really have blown away the competition. But apparently Commodore never truly believed in the device and, without proper marketing, the AMIGA failed to catch on as rapidly as it could have done, ultimately dying in the mid 1990s in the face of growing competition from IBM PCs and eventually leading to Commodore's own downfall. AMIGA patents and other intellectual property were liquidated by Commodore's trustees in bankruptcy and went to ESCOM, who also went bankrupt, and then to GATEWAY, with most of them finally ending up in the hands of Amiga Corporation. The latter is currently working to release new AMIGA models, which will probably have little in common with their ancestors but innovative hardware and software, if the statements on their website are to be believed.

NEW CONSOLES | 6 |

In the late 1980s, numerous game consoles were released that offered enhanced capabilities, with higher speeds, better graphics and improved sound. The following paragraphs, while certainly not complete, contain a run-down of the most important events.

The NEC TURBOGRAFX-16 (known as PC ENGINE in Japan) was released in 1987 and marketed as the first 16-bit console. This, however, had been a little exaggerated, since only the graphics chip of the unit was really a 16-bit design, while its main processor was still an 8-bit chip. The TURBOGRAFX featured an unprecedented six channels of digitized sound, as well as two additional channels supporting hardware playback of ADPCM-compressed sounds (see below) and stereo output.

Most notably, however, the TURBOGRAFX was the first console that could be expanded with a CD-ROM drive attachment. Compared to a typical 2MBit ROM game cartridge commonly used at the time, CD-ROMs offered a huge amount of storage space – the contents of about 2,000 of these modules could be easily stored on a single disc. Suddenly, game designers were no longer limited by the drastic constraints that had previously been imposed on them. Over the years, most of them had learned to make the most of the little space available inside game cartridges, so when they were suddenly confronted with the vast capacity of the CD-ROM, they simply did not know what to do with it. It would take some time for them to adapt to the new situation. One thing that immediately sprang to mind was to use the extra space to store better and more realistic game music and sounds. Since the CD-ROM drive was just an add-on to the cartridge-based TURBOGRAFX, the first games released on CD-ROM were simply enhanced versions of their cartridge counterparts with added sound.
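
The comparison between cartridge and disc can be checked with a short calculation. The sketch below simply converts the figures quoted above and assumes that »2MBit« means 2 × 1024 × 1024 bits; the variable names are chosen for this example.

```python
# Storage comparison using the figures quoted in the text.
CARTRIDGE_BITS = 2 * 1024 * 1024         # a 2MBit ROM cartridge (assumed binary units)
CARTRIDGE_BYTES = CARTRIDGE_BITS // 8    # 262,144 bytes, i.e. 256KB
CARTRIDGES_PER_DISC = 2000               # "about 2,000 of these modules"

total_bytes = CARTRIDGE_BYTES * CARTRIDGES_PER_DISC
total_megabytes = total_bytes / (1024 * 1024)

print(f"one cartridge: {CARTRIDGE_BYTES // 1024}KB")
print(f"{CARTRIDGES_PER_DISC} cartridges: {total_megabytes:.0f}MB")
```

The result of roughly 500MB is of the same order as the capacity usually quoted for a CD-ROM, so the figure of 2,000 cartridges per disc appears plausible.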

The TURBOGRAFX system gained a fair level of popularity, but failed to amass a large crowd of followers in the important U.S. market – looking back, it would seem that NEC ended up being a pioneer and innovator rather than a huge success in the video game business.

In 1989, the Nintendo GAMEBOY was released at an initial price of $100. The small and lightweight portable device featured nice graphics on a 2.5" monochrome display with a resolution of 160x144 pixels and quickly became popular among both adults and kids – about one million units were sold in the year of its release alone. A bundled version of the simplistic but extremely addictive game TETRIS, created by the Russian student Alexey Pajitnov, further helped the success of the system. The GAMEBOY featured 4-channel stereo sound, with three channels being driven by a programmable sound generator (PSG) and the fourth channel offering 4-bit digitized sound. There was also the possibility to pass through an external audio signal, which would theoretically allow a more sophisticated sound chip inside the cartridge to feed its output into the unit. The projected MP3-player add-on for the GAMEBOY would work this way.

The original version of the device was revised in 1996 with the GAMEBOY POCKET, which was basically a re-design to make the unit even smaller and include a sharper display. As interest in the tiny companion slowly began to fade once again, Nintendo released the much improved GAMEBOY COLOUR in 1998. The unit offered a faster processor and a crisp TFT display that was capable of simultaneously displaying 52 colours out of a possible 32,000, but was still missing a backlight, the lack of which had been seen by many gamers as the most serious nuisance of the entire range of GAMEBOY models. Nonetheless, the colour version was a great success, assisted by the fact that Nintendo had taken great care to ensure full backwards-compatibility with all previous GAMEBOY designs. Despite graphical improvements, sound capabilities have remained largely unaltered from the first model – something that is going to change when Nintendo's latest development in portable video games, the GAMEBOY ADVANCE, is released in 2001. In addition to four channels of PSG-powered sounds, a special processor will allow for up to 32 digital voices to be downmixed to the two stereo channels in realtime. Though a completely new design, the GAMEBOY ADVANCE is still expected to be fully backwards-compatible thanks to an integrated chip that is essentially a complete re-do of an entire GAMEBOY COLOUR.

As of now, the GAMEBOY continues to prosper unremittingly. Thanks to new exciting developments just around the corner, there is no end of this in sight. Worldwide sales of 100 million units by June 2000 and a library of more than 1,000 games make the GAMEBOY the most successful video game system of all time.

The first real 16-bit console was released in 1989 by SEGA for $199. The MEGA DRIVE was introduced in the U.S. under the name GENESIS and was basically a re-design of an existing 16-bit arcade machine based on a Motorola 68000 processor running at 8MHz. The system featured nice graphics in a resolution of 320x224 pixels using a maximum of 64 simultaneous colours out of a possible 512. Thanks to its arcade heritage, the sound system was pretty impressive: a dedicated Z80 processor running at 4MHz controlled one PSG (a Texas Instruments SN76489AN, same as in the COLECOVISION, see above), one very advanced Yamaha FM synthesizer chip (YM 2612, 6 voices with 4 operators each, also used in the Yamaha DX27 and DX100 keyboards) and 6 channels of digitized sound, which were all sent to stereo outputs. The Z80 served a dual purpose, since it also provided backward-compatibility with games designed for the older SEGA MASTER SYSTEM, which would use it as the main processor with the 68000 left basically inactive.

Upon release, the MEGA DRIVE had to compete with the NES and the TURBOGRAFX-16. Thanks to SEGA's aggressive marketing, the unit eventually left the latter behind and it was soon realized by gamers that the system was a lot more powerful than the NES, which resulted in it being seen as something new, rather than just another NES rival. The MEGA DRIVE went on to become quite successful, with a base of over 700 games and an estimated 45 million units sold so far. The system is apparently still being produced by Majesco Sales and sells for less than $30 with annual sales of more than 600,000 units.

In 1991, SEGA released the SEGA CD, a CD-ROM add-on for the MEGA DRIVE marketed at an initial $299, mainly to strike at NEC's CD-ROM extension to the TURBOGRAFX. In contrast to NEC's version, it enhanced the MEGA DRIVE's capabilities by including an additional Motorola 68000 processor running at 12.5MHz, 6MBit of memory, 512KBit of dedicated sound RAM, as well as special hardware chips offering eight extra sound channels in stereo and playback of compressed full motion video (FMV). Despite the advanced features and good quality of games, the SEGA CD became only a moderate success, with about 140 games produced and worldwide sales of an estimated 3 million units.

The LYNX was the first hand-held colour video game, released in 1989 by ATARI with a retail price of $149. Originally developed by Epyx in 1987, the system featured a 3.5" colour LCD that was capable of simultaneously displaying 16 colours out of a possible 4096 at a resolution of 160x102 pixels. Two powerful integrated CMOS chips, prominently called »Mikey« and »Suzy«, contained all the necessary functions of the LYNX. This fact makes it only too obvious that the system was designed by Dave Needle and R.J. Mical, who had also been members of the Amiga design team. They did not forget to include an enhanced version of the AMIGA's sound circuit, offering 4 channels of 8-bit digital sound routed to a stereo output with the additional possibility to use variable left-right panning on each channel. Unfortunately, in spite of its great capabilities and attractive pricing, the unit failed to catch on in the market – probably because ATARI already had a bad name with many potential buyers, seeing that they had not had any significant success in the video game business since the crash of 1984.

In 1990, a hand-held version of NEC's TURBOGRAFX-16 was released as the TURBOGRAFX EXPRESS. The device was nearly identical to its console brother, only drastically reduced in size and equipped with a colour active matrix LCD. Virtually all of the games available for the TURBOGRAFX-16 could be played on the tiny technical masterpiece without restriction. In addition, a TV tuner was available for the unit that allowed users to watch TV programmes on the tiny display in excellent quality. Due to poor marketing and its high price, the TURBOGRAFX EXPRESS was even less successful than ATARI's LYNX. Nonetheless, it was clearly ahead of its time.

Facing increasing competition from SEGA's MEGA DRIVE, Nintendo decided to create their own 16-bit system. They had hesitated for a long time because of their overconfidence in the NES, which had cost them a valuable portion of the video game market. In 1991, after a phase of ambitious development, they were finally ready to release the cartridge-based console they had named SUPER FAMICOM in Japan, or SUPER NINTENDO ENTERTAINMENT SYSTEM (SNES) for the rest of the world. With the clear intention of taking on SEGA's popular console, the SNES had been specifically designed to surpass the MEGA DRIVE in certain aspects, most notably in the graphics department. Thus, the SNES could produce a resolution of 512x448 pixels using a selection of 256 colours from a palette of 32,768. In addition, there was a special graphics mode that allowed large background graphics to be scaled and rotated, which could be used to create impressive 3D illusions.

Despite its late arrival in the market and its incompatibility with NES game cartridges, the SNES did pretty well, especially in Japan. According to Nintendo, 46 million units have been sold worldwide so far – a figure that is very close to the number of existing SEGA MEGA DRIVE consoles.

The sound capabilities of the SNES were also quite remarkable – though not as obvious as the improved visual qualities. Inside the SNES, a small self-contained device handled every single function related to sound and music. This sound module contained a special 8-bit CPU running at 2MHz (SONY SPC-700) with an attached 16-bit digital signal processor (DSP, also manufactured by SONY), 64KB of memory and a 16-bit stereo digital-to-analog converter (DAC). The DSP was capable of mixing 8 voices in realtime, at various pitches and volumes as well as stereo locations (left-right panning settings). In order to play a piece of music, a special program for the SPC-700 had to be written, which would then be loaded into the sound module's internal memory upon game start and executed from there. The program, in turn, would control the DSP like a complex instrument by instructing it which samples to use from inside the cartridge and how to alter them by changing volume or pitch, performing the desired stereo panning, and applying an amplitude envelope, various filters or even an echo effect. Game composers usually created their tunes using MIDI sequencing software running on IBM PCs or Macs. The resulting MIDI file was then handed over to the game programmers, who created a program for the SPC-700 that would play the song and included the necessary samples of the desired sounds and instruments in the game cartridge. Once a universal play-routine for MIDI files had been created on the SPC-700, it could be re-used for each successive game whose music had been created by the composer using the MIDI format.
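
The mixing step described above can be illustrated with a short Python sketch. It is a deliberately simplified model and not the actual algorithm of the SNES sound module: the `Voice` structure, the linear panning law and the nearest-sample pitch stepping are all assumptions made for illustration, and envelopes, filters and echo are left out entirely.

```python
# Simplified illustration of mixing up to 8 sampled voices into stereo.
# NOT the real SNES DSP algorithm; names and formulas are hypothetical.
from dataclasses import dataclass
from typing import List, Tuple

MAX_VOICES = 8  # number of voices the text gives for the SNES DSP


@dataclass
class Voice:
    sample: List[float]  # source waveform, values in -1.0 .. 1.0
    pitch: float         # read step per output frame (1.0 = original pitch)
    volume: float        # 0.0 (silent) .. 1.0 (full)
    pan: float           # 0.0 = hard left, 1.0 = hard right (assumed linear law)


def mix(voices: List[Voice], frames: int) -> List[Tuple[float, float]]:
    """Return `frames` stereo frames as (left, right) tuples."""
    if len(voices) > MAX_VOICES:
        raise ValueError("at most 8 voices can be mixed")
    out = []
    for n in range(frames):
        left = right = 0.0
        for v in voices:
            pos = int(n * v.pitch)  # nearest-sample lookup, no interpolation
            if pos >= len(v.sample):
                continue            # this voice has finished playing
            s = v.sample[pos] * v.volume
            left += s * (1.0 - v.pan)
            right += s * v.pan
        out.append((left, right))
    return out


if __name__ == "__main__":
    bass = Voice(sample=[1.0, 0.5], pitch=1.0, volume=0.5, pan=0.0)
    lead = Voice(sample=[1.0, 1.0], pitch=2.0, volume=1.0, pan=1.0)
    print(mix([bass, lead], frames=3))
```

In this model, the play-routine running on the SPC-700 would correspond to the code that creates and updates the `Voice` settings over time, while `mix` stands in for the work done by the DSP.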

Following the great success of the GAMEBOY, Nintendo released the SUPER GAMEBOY add-on module for the SNES in 1992. In order to play a game, a GAMEBOY cartridge would be inserted into this module, which, in turn, would be plugged into the cartridge slot of the SNES – much like an adapter. The SUPER GAMEBOY basically contained a complete duplicate of a real GAMEBOY, using the control signals from the SNES controllers and sending its display output to the TV set via the SNES. In addition, it contained a circuit to allow for colour output of the monochrome GAMEBOY games.

A major drawback of the SNES was its relatively slow main processor (a custom 65816 CPU running at a maximum speed of 3.6MHz) compared to the MEGA DRIVE (8MHz 68000). It is believed that Nintendo had decided to use a slower processor because the types of games popular in Japan at the time (role-playing and adventure) did not require a lot of horsepower. When it came to high-speed action games, however, the lack of speed sometimes resulted in poor performance. Thus, Nintendo started including a range of higher-speed processors in the cartridges that would take some load off the main SNES processor. At least seven different chips were used over the years, the fastest of which (the SuperFX2) was a tandem of two RISC processors running at the incredible combined speed of 21MHz.

Although a CD-ROM add-on for the SNES had originally been planned by Nintendo, it was never released. For its design, Nintendo had joined forces with SONY in 1990. After various disagreements about how the unit should be designed and marketed with the two companies pushing each other around, the alliance finally broke apart when SONY announced the PLAY STATION (note the space in the name) – a CD-ROM based unit that would also be able to play SNES cartridges – in 1991, followed by Nintendo declaring their plans to team up with PHILIPS to develop their SNES CD-ROM add-on exactly one day later. Reportedly, SONY was not very happy with this turn of events and decided to develop their own CD-ROM video game console, based on the prototype they had already created.

32-BIT GAMING | 7 |

With the Panasonic FZ-1, the first 32-bit console based on the 3DO design was released in 1993 for $700. It was the first CD-only video game system and offered high-quality truecolour graphics, full motion video and CD-quality stereo sound. The 3DO had been developed by a partnership of several companies, called the 3DO Company. Hardware manufacturers then licensed the rights to build their own 3DO systems, and software manufacturers likewise had to obtain a license if they wanted to create a game for the system. This led to some good, but also a number of incredibly bad games. Though the system was technically interesting, its high price as well as the lack of quality games hindered its success – only a few hundred thousand units were ever sold.

Interestingly, with the 3DO BLASTER, manufactured by CREATIVE LABS and released in 1995, an add-on card for IBM PCs existed that made it possible to play 3DO titles on any 386 PC equipped with a Panasonic double-speed CD-ROM drive. Games could be played full-screen or inside a window under Microsoft Windows 3.1. I remember the product showing up in some local computer stores at the time and collecting dust, since no one knew what to do with it, considering the fact that almost no 3DO software was available. This was probably true for large parts of the rest of the world, so the product quickly disappeared.

Also in 1993, the cartridge-based JAGUAR was released by ATARI as the world's first 64-bit system at an initial $250. Though some processors were still 32-bit, the most important components were built upon a 64-bit architecture and memory bus. The JAGUAR featured a radically advanced design that would not be surpassed for years. Unfortunately, all that was to no avail, since upon its release only a few games existed for the system, none of which was considered a killer title. Game production for the JAGUAR went at a snail's pace since all major game manufacturers had already committed themselves to either Nintendo or SEGA at the time, which left all the work to ATARI. Even worse for ATARI, the two big players also possessed many important game licenses. Nonetheless, a handful of interesting games eventually appeared, namely DOOM, ALIEN VS. PREDATOR and TEMPEST 2000, which saved the JAGUAR from quick and total extinction. A CD-ROM add-on that was released in 1995 for $150 along with a few CD game titles could not prevent the JAGUAR's slow but continuous decline. A total of 125,000 units had been sold by 1995. As an interesting side-note, an audio compact disc containing the soundtrack of the popular JAGUAR game TEMPEST 2000 was released in 1994. It is believed that it was the first commercially sold soundtrack of a video game.

On November 21, 1994, the SEGA SATURN was released in Japan at an initial $399 – a mere two weeks prior to its most serious rival, the SONY PLAYSTATION, hitting the shelves. About 200,000 units were sold on the very first day, mainly thanks to the availability of an almost perfect arcade translation of the immensely popular game VIRTUA FIGHTER for the system. As with the SEGA MEGA DRIVE, the CD-based SATURN was effectively a modified version of a real arcade machine. It housed no fewer than eight processors, the most important ones being the two main 32-bit Hitachi SH2 RISC chips running at 28.6MHz, two 32-bit video processors and an 11.3MHz Motorola 68EC000 to control sound output, assisted by a 22.6MHz Yamaha FH1 digital audio signal processor. The built-in double-speed CD-ROM drive was equipped with a dedicated buffer of 512KB memory to optimize performance and minimize delays caused by seek operations. The SATURN could display a maximum resolution of 704x480 pixels in true colour (256 shades per primary colour = 16,777,216 possible colours). Since full motion video was realized through software decoding only, it was generally deemed inferior to the quality that could be produced by SONY's PLAYSTATION, which included optimized hardware circuitry for the purpose.

Though the SATURN was not quite as powerful as the PLAYSTATION when it came to 3D graphics processing, its 2D capabilities as well as its sound subsystem were quite amazing. Thanks to two dedicated processors, the latter could process 32 channels of CD-quality audio in realtime and handle 32 MIDI channels. It also contained an advanced 32-voice Yamaha FM synthesizer, which seems to have been used in only a few games, though. The output was downmixed to stereo, with the possibility of individual panning applied to each channel. The major drawback of the SATURN's sound system was that all audio samples had to be downloaded in raw format (i.e. uncompressed) into its internal audio memory buffer of 512K before they could be used. This limitation led to a shortage of space when many different sounds were needed at the same time, for example in complex fighting games. Thus, the sample rate of the sounds in question had to be reduced in order to conserve memory, which led to a somewhat muffled or scratchy impression.
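
To get a feeling for this trade-off, the following Python sketch estimates how many seconds of raw audio fit into a 512K buffer at different sample rates. The sample format (16-bit mono) and the assumption that the entire buffer is available for sample data are choices made for illustration only; the function name is hypothetical.

```python
# Back-of-the-envelope estimate of raw sample capacity.
# Assumptions (not from the article): 16-bit mono samples, and the
# whole 512K buffer is free for sample data.
BUFFER_BYTES = 512 * 1024  # the 512K audio buffer mentioned in the text


def seconds_of_audio(sample_rate_hz, bytes_per_sample=2, channels=1,
                     buffer_bytes=BUFFER_BYTES):
    """Seconds of uncompressed audio that fit into the buffer."""
    bytes_per_second = sample_rate_hz * bytes_per_sample * channels
    return buffer_bytes / bytes_per_second


if __name__ == "__main__":
    for rate in (44100, 22050, 11025):
        print(f"{rate:>6} Hz: {seconds_of_audio(rate):5.1f} s")
```

Under these assumptions the buffer holds only about six seconds of sound at 44.1kHz. Each halving of the sample rate doubles the available playing time, but it also halves the highest frequency that can be reproduced, which is consistent with the muffled impression described above.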

The SATURN became quite popular in Japan, with sales of over 5.5 million units, until it was discontinued by SEGA in 1998 in favour of their new console, the DREAMCAST (see below). It remained Japan's top-selling console for almost two years, starting with its release, until it was finally overtaken by the SONY PLAYSTATION in 1997. Due to crippling marketing mistakes in the U.S. as well as in Europe, the system fell short in these markets, with only about 2 million units sold in North America and fewer than 1 million in Europe.

On December 3, 1994, SONY Computer Entertainment, Inc. released their new 32-bit, CD-based game console – the PLAYSTATION – in Japan with an initial retail price of ¥37,000 ($387). The U.S. launch followed in September 1995 at $299. The system was powered by an R3000A 32-bit RISC processor manufactured by LSI Logic running at 33.8MHz. The CPU was integrated into a custom chip that also contained the Geometry Transfer Engine (GTE), responsible for 3D graphics calculations, as well as the Data Decompression Engine (MDEC) that handled realtime decompression of high-quality full motion video and graphics. A special Graphics Processing Unit (GPU) dealt with the rendering of 3D graphics, including flat or Gouraud shading of objects, as well as texture mapping. A maximum resolution of 640x480 pixels could be displayed in true colour. The PLAYSTATION's stereo sound was created using a special chip that could process 24 channels of 16-bit sound at a sampling rate of 44.1kHz and allowed for realtime effects, such as pitch modulation, amplitude envelopes, looping and digital reverb. It also included 512KB of dedicated audio memory and direct support for MIDI instruments. In contrast to the SEGA SATURN, samples did not have to be decompressed before they could be used, which was a clear advantage, considering that a 4:1 compression was usually applied to the samples with only little influence on sound quality. The included double-speed CD-ROM drive had a maximum capacity of 660 Megabytes and was capable of playing audio CDs.
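
The practical effect of the 4:1 compression can be estimated with a few lines of Python. The sketch assumes 16-bit mono samples at 44.1kHz and treats the full 512KB of audio memory as available for sample data, which is a simplification; the function name and defaults are hypothetical.

```python
# Rough comparison of raw versus 4:1-compressed sample storage.
# Assumptions (not from the article): 16-bit mono samples at 44.1kHz,
# and all 512KB of audio memory is free for sample data.
AUDIO_RAM_BYTES = 512 * 1024


def playable_seconds(compression_ratio, sample_rate_hz=44100,
                     bytes_per_sample=2, ram_bytes=AUDIO_RAM_BYTES):
    """Seconds of audio that fit when samples are stored at the given ratio."""
    uncompressed_equivalent = ram_bytes * compression_ratio
    return uncompressed_equivalent / (sample_rate_hz * bytes_per_sample)


if __name__ == "__main__":
    raw = playable_seconds(1.0)     # samples stored uncompressed
    packed = playable_seconds(4.0)  # samples stored with 4:1 compression
    print(f"raw: {raw:.1f} s, 4:1 compressed: {packed:.1f} s")
```

With the same amount of memory, storing samples compressed at 4:1 yields four times the playing time, so sample rates do not have to be lowered as readily to make the sounds fit.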